Geographic information system facts for kids

A geographic information system (GIS) consists of integrated computer hardware and software that store, manage, analyze, edit, output, and visualize geographic data. Much of this often happens within a spatial database; however, this is not essential to meet the definition of a GIS. In a broader sense, one may consider such a system to also include human users and support staff, procedures and workflows, the body of knowledge of relevant concepts and methods, and institutional organizations.

The uncounted plural, geographic information systems, also abbreviated GIS, is the most common term for the industry and profession concerned with these systems. It is roughly synonymous with geoinformatics. The academic discipline that studies these systems and their underlying geographic principles may also be abbreviated as GIS, but the unambiguous GIScience is more common. GIScience is often considered a subdiscipline of geography within the branch of technical geography.

Geographic information systems are used in multiple technologies, processes, techniques, and methods. They are tied to various operations and numerous applications relating to engineering, planning, management, transport/logistics, insurance, telecommunications, and business. For this reason, GIS and location intelligence applications are at the foundation of location-enabled services, which rely on geographic analysis and visualization.

GIS provides the capability to relate previously unrelated information through the use of location as the "key index variable". Locations and extents in the Earth's spacetime can be recorded through the date and time of occurrence, along with x, y, and z coordinates representing longitude (x), latitude (y), and elevation (z). All Earth-based, spatial–temporal location and extent references should be relatable to one another, and ultimately to a "real" physical location or extent. This key characteristic of GIS has begun to open new avenues of scientific inquiry and studies.

History and development

While digital GIS dates to the mid-1960s, when Roger Tomlinson first coined the phrase "geographic information system", many of the geographic concepts and methods that GIS automates date back decades earlier.

One of the first known instances in which spatial analysis was used came from the field of epidemiology in the "Rapport sur la marche et les effets du choléra dans Paris et le département de la Seine" (1832). The French geographer and cartographer Charles Picquet created a map outlining the forty-eight districts of Paris, using halftone color gradients to provide a visual representation of the number of reported deaths due to cholera per 1,000 inhabitants.

In 1854, John Snow, an epidemiologist and physician, was able to determine the source of a cholera outbreak in London through the use of spatial analysis. Snow achieved this through plotting the residence of each casualty on a map of the area, as well as the nearby water sources. Once these points were marked, he was able to identify the water source within the cluster that was responsible for the outbreak. This was one of the earliest successful uses of a geographic methodology in pinpointing the source of an outbreak in epidemiology. While the basic elements of topography and theme existed previously in cartography, Snow's map was unique due to his use of cartographic methods, not only to depict, but also to analyze clusters of geographically dependent phenomena.

The early 20th century saw the development of photozincography, which allowed maps to be split into layers, for example one layer for vegetation and another for water. This was particularly used for printing contours – drawing these was a labour-intensive task but having them on a separate layer meant they could be worked on without the other layers to confuse the draughtsman. This work was initially drawn on glass plates, but later plastic film was introduced, with the advantages of being lighter, using less storage space and being less brittle, among others. When all the layers were finished, they were combined into one image using a large process camera. Once color printing came in, the layers idea was also used for creating separate printing plates for each color. While the use of layers much later became one of the typical features of a contemporary GIS, the photographic process just described is not considered a GIS in itself, as the maps were just images with no database to link them to.

Two additional developments are notable in the early days of GIS: Ian McHarg's publication "Design with Nature" and its map overlay method and the introduction of a street network into the U.S. Census Bureau's DIME (Dual Independent Map Encoding) system.

The first publication detailing the use of computers to facilitate cartography was written by Waldo Tobler in 1959. Further computer hardware development spurred by nuclear weapon research led to more widespread general-purpose computer "mapping" applications by the early 1960s.

In 1960 the world's first true operational GIS was developed in Ottawa, Ontario, Canada, by the federal Department of Forestry and Rural Development. Developed by Dr. Roger Tomlinson, it was called the Canada Geographic Information System (CGIS) and was used to store, analyze, and manipulate data collected for the Canada Land Inventory, an effort to determine the land capability for rural Canada by mapping information about soils, agriculture, recreation, wildlife, waterfowl, forestry and land use at a scale of 1:50,000. A rating classification factor was also added to permit analysis.

CGIS was an improvement over "computer mapping" applications as it provided capabilities for data storage, overlay, measurement, and digitizing/scanning. It supported a national coordinate system that spanned the continent, coded lines as arcs having a true embedded topology and it stored the attribute and locational information in separate files. As a result of this, Tomlinson has become known as the "father of GIS", particularly for his use of overlays in promoting the spatial analysis of convergent geographic data. CGIS lasted into the 1990s and built a large digital land resource database in Canada. It was developed as a mainframe-based system in support of federal and provincial resource planning and management. Its strength was continent-wide analysis of complex datasets. The CGIS was never available commercially.

In 1964 Howard T. Fisher formed the Laboratory for Computer Graphics and Spatial Analysis at the Harvard Graduate School of Design (LCGSA 1965–1991), where a number of important theoretical concepts in spatial data handling were developed, and which by the 1970s had distributed seminal software code and systems, such as SYMAP, GRID, and ODYSSEY, to universities, research centers and corporations worldwide. These programs were the first examples of general-purpose GIS software that was not developed for a particular installation, and they were very influential on future commercial software, such as Esri ARC/INFO, released in 1983.

By the late 1970s, two public domain GIS packages (MOSS and GRASS GIS) were in development. By the early 1980s, M&S Computing (later Intergraph) along with Bentley Systems Incorporated for the CAD platform, Environmental Systems Research Institute (ESRI), CARIS (Computer Aided Resource Information System), and ERDAS (Earth Resource Data Analysis System) had emerged as commercial vendors of GIS software. These vendors successfully incorporated many of the CGIS features, combining the first-generation approach of separating spatial and attribute information with a second-generation approach of organizing attribute data into database structures.

In 1986, Mapping Display and Analysis System (MIDAS), the first desktop GIS product, was released for the DOS operating system. It was renamed MapInfo for Windows in 1990, when it was ported to the Microsoft Windows platform. This began the process of moving GIS from the research department into the business environment.

By the end of the 20th century, the rapid growth in various systems had been consolidated and standardized on relatively few platforms, and users were beginning to explore viewing GIS data over the Internet, which required data format and transfer standards. More recently, a growing number of free, open-source GIS packages run on a range of operating systems and can be customized to perform specific tasks. The major trend of the 21st century has been the integration of GIS capabilities with other information technology and Internet infrastructure, such as relational databases, cloud computing, software as a service (SaaS), and mobile computing.

GIS software

A distinction must be made between a singular geographic information system, which is a single installation of software and data for a particular use, along with associated hardware, staff, and institutions (e.g., the GIS for a particular city government), and GIS software, a general-purpose application program that is intended to be used in many individual geographic information systems in a variety of application domains. Starting in the late 1970s, many software packages have been created specifically for GIS applications. Esri's ArcGIS, which includes ArcGIS Pro and the legacy software ArcMap, currently dominates the GIS market. Other examples of GIS software include Autodesk products and MapInfo Professional, as well as open-source programs such as QGIS, GRASS GIS, MapGuide, and Hadoop-GIS. These and other desktop GIS applications include a full suite of capabilities for entering, managing, analyzing, and visualizing geographic data, and are designed to be used on their own.

Starting in the late 1990s with the emergence of the Internet, as computer network technology progressed, GIS infrastructure and data began to move to servers, offering another mechanism for providing GIS capabilities. This was facilitated by standalone software installed on a server, similar to other server software such as HTTP servers and relational database management systems, enabling clients to access GIS data and processing tools without having to install specialized desktop software. These networks are known as distributed GIS. This strategy has been extended through the Internet and the development of cloud-based GIS platforms such as ArcGIS Online and GIS-specialized software as a service (SaaS). The use of the Internet to facilitate distributed GIS is known as Internet GIS.

An alternative approach is the integration of some or all of these capabilities into other software or information technology architectures. One example is a spatial extension to object-relational database software, which defines a geometry datatype so that spatial data can be stored in relational tables, together with extensions to SQL for spatial analysis operations such as overlay. Another example is the proliferation of geospatial libraries and application programming interfaces (e.g., GDAL, Leaflet, D3.js) that extend programming languages to enable the incorporation of GIS data and processing into custom software, including web mapping sites and location-based services in smartphones.
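
As a rough illustration of extending SQL with a spatial operation, the following Python sketch registers a hypothetical `point_in_bbox` function with an in-memory SQLite database and uses it in a query. The table, column names, and function are assumptions made for this example; real spatial extensions define a proper geometry datatype and far richer operations than this bounding-box test.

```python
import sqlite3


def point_in_bbox(x, y, min_x, min_y, max_x, max_y):
    """Hypothetical spatial predicate: 1 if the point lies inside the box, else 0."""
    return int(min_x <= x <= max_x and min_y <= y <= max_y)


conn = sqlite3.connect(":memory:")
# Make the Python function callable from SQL under the same name.
conn.create_function("point_in_bbox", 6, point_in_bbox)

# Illustrative table: each shop is stored with plain x/y columns.
conn.execute("CREATE TABLE shops (name TEXT, x REAL, y REAL)")
conn.executemany(
    "INSERT INTO shops VALUES (?, ?, ?)",
    [("bakery", 2.0, 3.0), ("garage", 9.0, 9.0), ("cafe", 4.5, 1.0)],
)

# Select only the shops that fall inside a district's bounding box (0,0)-(5,5).
rows = conn.execute(
    "SELECT name FROM shops WHERE point_in_bbox(x, y, 0, 0, 5, 5) ORDER BY name"
).fetchall()
inside = [name for (name,) in rows]
print(inside)
```

The query reads like ordinary SQL, which is the point of such extensions: spatial conditions sit alongside attribute conditions in the same statement.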

Geospatial data management

The core of any GIS is a database that contains representations of geographic phenomena, modeling their geometry (location and shape) and their properties or attributes. A GIS database may be stored in a variety of forms, such as a collection of separate data files or a single spatially-enabled relational database. Collecting and managing these data usually constitutes the bulk of the time and financial resources of a project, far more than other aspects such as analysis and mapping.

Aspects of geographic data

GIS uses spatio-temporal (space-time) location as the key index variable for all other information. Just as a relational database containing text or numbers can relate many different tables using common key index variables, GIS can relate otherwise unrelated information by using location as the key index variable. The key is the location and/or extent in space-time.
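
The idea of location as a join key can be sketched in a few lines of Python. In this minimal example, two otherwise unrelated datasets are related by snapping their coordinates to a shared grid cell; the datasets, the `cell_key` helper, and the 0.5-degree cell size are all hypothetical choices for illustration.

```python
import math


def cell_key(lon, lat, size=0.5):
    """Snap a coordinate to the grid cell containing it (assumed 0.5-degree cells)."""
    return (math.floor(lon / size), math.floor(lat / size))


# Two datasets with no shared identifier, only coordinates (made-up values).
rainfall = [(-95.40, 29.70, 1200), (-97.70, 30.30, 870)]  # lon, lat, mm per year
clinics = [("Clinic A", -95.37, 29.76), ("Clinic B", -97.74, 30.27)]

# Index one dataset by location, then look the other dataset up by location.
rain_by_cell = {cell_key(lon, lat): mm for lon, lat, mm in rainfall}
joined = {name: rain_by_cell.get(cell_key(lon, lat)) for name, lon, lat in clinics}
print(joined)
```

A grid cell is only one possible way to turn location into a key; a real GIS would more often use geometric tests such as containment or proximity.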

Any variable that can be located spatially, and increasingly also temporally, can be referenced using a GIS. Locations or extents in Earth space–time may be recorded as dates/times of occurrence, and x, y, and z coordinates representing longitude, latitude, and elevation, respectively. These GIS coordinates may represent other quantified systems of temporo-spatial reference (for example, film frame number, stream gage station, highway mile-marker, surveyor benchmark, building address, street intersection, entrance gate, water depth sounding, POS or CAD drawing origin/units). Units applied to recorded temporal-spatial data can vary widely (even when using exactly the same data, see map projections), but all Earth-based spatial–temporal location and extent references should, ideally, be relatable to one another and ultimately to a "real" physical location or extent in space–time.

Related by accurate spatial information, an incredible variety of real-world and projected past or future data can be analyzed, interpreted and represented. This key characteristic of GIS has begun to open new avenues of scientific inquiry into behaviors and patterns of real-world information that previously had not been systematically correlated.

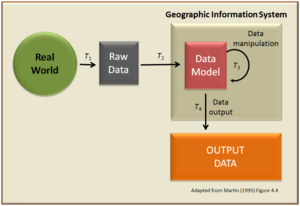

Data modeling

GIS data represents phenomena that exist in the real world, such as roads, land use, elevation, trees, waterways, and states. The most common types of phenomena that are represented in data can be divided into two conceptualizations: discrete objects (e.g., a house, a road) and continuous fields (e.g., rainfall amount or population density). Other types of geographic phenomena, such as events (e.g., location of World War II battles), processes (e.g., extent of suburbanization), and masses (e.g., types of soil in an area) are represented less commonly or indirectly, or are modeled in analysis procedures rather than data.

Traditionally, two broad methods are used to store data in a GIS for both kinds of abstraction: raster images and vector data. In vector data, mapped locations and their attributes are represented by points, lines, and polygons.
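
The difference between the two storage methods can be sketched as simple Python data structures. This is a minimal illustration under assumed names and values (`Raster`, `value_at`, the sample features), not the layout used by any particular GIS package.

```python
import math
from dataclasses import dataclass


@dataclass
class Raster:
    """A grid of cell values anchored at its upper-left corner."""
    origin_x: float
    origin_y: float
    cell_size: float
    cells: list  # rows of values, top row first

    def value_at(self, x, y):
        """Return the value of the cell containing map position (x, y)."""
        col = math.floor((x - self.origin_x) / self.cell_size)
        row = math.floor((self.origin_y - y) / self.cell_size)
        if not (0 <= row < len(self.cells) and 0 <= col < len(self.cells[0])):
            raise ValueError("position lies outside the raster")
        return self.cells[row][col]


# Vector data: discrete objects described by coordinates plus attributes.
well = {"type": "Point", "coordinates": (12.0, 37.0), "attributes": {"depth_m": 40}}
road = {"type": "LineString", "coordinates": [(0, 0), (10, 5), (25, 5)]}
field = {"type": "Polygon", "coordinates": [(0, 0), (20, 0), (20, 10), (0, 10), (0, 0)]}

# Raster data: a continuous field sampled on a regular grid (made-up elevations).
elevation = Raster(0.0, 40.0, 10.0, [[5, 6, 7], [4, 5, 6], [3, 4, 5], [2, 3, 4]])
elevation_at_well = elevation.value_at(*well["coordinates"])
print(elevation_at_well)
```

The vector features carry their own coordinates, while the raster stores only values and derives each cell's position from the grid origin and cell size.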

A newer hybrid method of storing data is the point cloud, which combines three-dimensional points with RGB information at each point, returning a "3D color image". GIS thematic maps are thus becoming more and more realistic visual descriptions of what they set out to show or determine.

Data acquisition

GIS data acquisition includes several methods for gathering spatial data into a GIS database, which can be grouped into three categories: primary data capture, the direct measurement of phenomena in the field (e.g., remote sensing, the global positioning system); secondary data capture, the extraction of information from existing sources that are not in a GIS form, such as paper maps, through digitization; and data transfer, the copying of existing GIS data from external sources such as government agencies and private companies. All of these methods can consume significant time, finances, and other resources.

Primary data capture

Survey data can be directly entered into a GIS from digital data collection systems on survey instruments using a technique called coordinate geometry (COGO). Positions from a global navigation satellite system (GNSS) such as the Global Positioning System can also be collected and then imported into a GIS. A current trend in data collection is the use of field computers that can edit live data over wireless connections or in disconnected editing sessions, including applications on smartphones and PDAs (mobile GIS). This has been enhanced by the availability of low-cost mapping-grade GPS units with decimeter accuracy in real time, which eliminates the need to post-process, import, and update the data in the office after fieldwork has been completed. Positions collected using a laser rangefinder can also be incorporated. New technologies also allow users to create maps and perform analysis directly in the field, making projects more efficient and mapping more accurate.
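
One basic coordinate geometry calculation is turning surveyed bearings and distances into coordinates. The sketch below assumes azimuths measured clockwise from north and a flat local grid of eastings and northings; the `traverse` function and the sample legs are hypothetical.

```python
import math


def traverse(start, legs):
    """Compute station coordinates from (azimuth in degrees, distance) legs.

    Azimuths are assumed to be measured clockwise from grid north.
    """
    easting, northing = start
    stations = [(easting, northing)]
    for azimuth_deg, distance in legs:
        azimuth = math.radians(azimuth_deg)
        easting += distance * math.sin(azimuth)
        northing += distance * math.cos(azimuth)
        stations.append((easting, northing))
    return stations


# A rectangular loop: east 100, north 50, west 100, south 50 (made-up survey).
stations = traverse(
    (1000.0, 5000.0),
    [(90.0, 100.0), (0.0, 50.0), (270.0, 100.0), (180.0, 50.0)],
)
# Misclosure: how far the last station lands from the starting point.
misclosure = math.dist(stations[0], stations[-1])
print(stations[1], misclosure)
```

In a closed traverse like this one, the misclosure should be close to zero; a large value would point to a measurement or data-entry error.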

Remotely sensed data also plays an important role in data collection. It is gathered by sensors attached to a platform: sensors include cameras, digital scanners, and lidar, while platforms usually consist of aircraft and satellites. In England in the mid-1990s, hybrid kite/balloons called helikites pioneered the use of compact airborne digital cameras as airborne geo-information systems. Aircraft measurement software, accurate to 0.4 mm, was used to link the photographs and measure the ground. Helikites are inexpensive and gather more accurate data than aircraft. Helikites can be used over roads, railways and towns where unmanned aerial vehicles (UAVs) are banned.

Recently aerial data collection has become more accessible with miniature UAVs and drones. For example, the Aeryon Scout was used to map a 50-acre area with a ground sample distance of 1 inch (2.54 cm) in only 12 minutes.
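
To give a sense of the data volume implied by those figures, the following back-of-the-envelope Python sketch counts how many 1-inch ground samples are needed to cover 50 acres. It assumes the area is covered exactly once, with no overlap between images, which real flights would not achieve; the function name is hypothetical.

```python
SQ_FT_PER_ACRE = 43_560
SQ_IN_PER_SQ_FT = 144


def samples_for_area(acres, gsd_inches):
    """Number of ground samples needed to cover an area once at a given GSD."""
    area_sq_in = acres * SQ_FT_PER_ACRE * SQ_IN_PER_SQ_FT
    return area_sq_in / gsd_inches ** 2


samples = samples_for_area(50, 1)
samples_per_minute = samples / 12  # spread over the 12-minute flight
print(f"{samples:,.0f} samples, {samples_per_minute:,.0f} per minute")
```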

The majority of digital data currently comes from photo interpretation of aerial photographs. Soft-copy workstations are used to digitize features directly from stereo pairs of digital photographs. These systems allow data to be captured in two and three dimensions, with elevations measured directly from a stereo pair using principles of photogrammetry. Analog aerial photos must be scanned before being entered into a soft-copy system; for high-quality digital cameras this step is skipped.

Satellite remote sensing provides another important source of spatial data. Here satellites use different sensor packages to passively measure the reflectance from parts of the electromagnetic spectrum or radio waves that were sent out from an active sensor such as radar. Remote sensing collects raster data that can be further processed using different bands to identify objects and classes of interest, such as land cover.

Secondary data capture

The most common method of data creation is digitization, where a hard copy map or survey plan is transferred into a digital medium through the use of a CAD program and geo-referencing capabilities. With the wide availability of ortho-rectified imagery (from satellites, aircraft, helikites and UAVs), heads-up digitizing is becoming the main avenue through which geographic data is extracted. Heads-up digitizing involves tracing geographic data directly on top of the aerial imagery instead of using the traditional method of tracing the geographic form on a separate digitizing tablet (heads-down digitizing). Heads-down digitizing, or manual digitizing, uses a special magnetic pen, or stylus, that feeds information into a computer to create an identical, digital map. Some tablets use a mouse-like tool, called a puck, instead of a stylus. The puck has a small window with cross-hairs, which allows for greater precision in pinpointing map features. Though heads-up digitizing is more commonly used, heads-down digitizing is still useful for digitizing maps of poor quality.

Existing data printed on paper or PET film maps can be digitized or scanned to produce digital data. A digitizer produces vector data as an operator traces points, lines, and polygon boundaries from a map. Scanning a map results in raster data that could be further processed to produce vector data.

When data is captured, the user should consider whether it should be captured with relative or absolute accuracy, since this could influence not only how the information will be interpreted but also the cost of data capture.

After entering data into a GIS, the data usually requires editing to remove errors, or further processing. Vector data must be made "topologically correct" before it can be used for some advanced analysis. For example, in a road network, lines must connect with nodes at an intersection. Errors such as undershoots and overshoots must also be removed. For scanned maps, blemishes on the source map may need to be removed from the resulting raster. For example, a fleck of dirt might connect two lines that should not be connected.
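
One simple check for connection errors in a line network is to look for endpoints that almost, but not quite, coincide. The Python sketch below is a simplified illustration: it only compares line endpoints with each other (a fuller check would also test endpoints against the interiors of other lines), and the `near_misses` function, the tolerance, and the sample roads are assumptions.

```python
import math
from itertools import combinations


def near_misses(lines, tolerance):
    """Return pairs of line endpoints that are within `tolerance` but not identical."""
    endpoints = {pt for line in lines for pt in (line[0], line[-1])}
    return sorted(
        (a, b)
        for a, b in combinations(sorted(endpoints), 2)
        if 0 < math.dist(a, b) <= tolerance
    )


# Made-up road segments; the last one stops 0.1 units short of the node at (5, 5).
roads = [
    [(0, 0), (5, 0)],
    [(5, 0), (5, 5)],
    [(5, 0), (10, 0)],
    [(4.9, 5), (0, 5)],
]
suspects = near_misses(roads, tolerance=0.25)
print(suspects)
```

Each reported pair is a candidate for snapping the two endpoints together so that the lines share a node.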

Projections, coordinate systems, and registration

The Earth can be represented by various models, each of which may provide a different set of coordinates (e.g., latitude, longitude, elevation) for any given point on the Earth's surface. The simplest model is to assume the Earth is a perfect sphere. As more measurements of the Earth have accumulated, the models of the Earth have become more sophisticated and more accurate. In fact, there are models called datums that apply to different areas of the Earth to provide increased accuracy, such as the North American Datum of 1983 for U.S. measurements and the World Geodetic System for worldwide measurements.
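
Under the simplest model, a perfect sphere, the distance between two points can be computed with the haversine formula. The sketch below assumes a mean Earth radius of 6,371 km; the function name is hypothetical, and results will differ slightly from those of more accurate ellipsoidal models.

```python
import math

EARTH_RADIUS_KM = 6371.0  # mean radius; the spherical model is an assumption


def great_circle_km(lat1, lon1, lat2, lon2):
    """Great-circle distance between two latitude/longitude points on a sphere."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = phi2 - phi1
    d_lambda = math.radians(lon2 - lon1)
    a = (
        math.sin(d_phi / 2) ** 2
        + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    )
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


# A quarter of the way around the equator: one quarter of the circumference.
quarter = great_circle_km(0.0, 0.0, 0.0, 90.0)
print(round(quarter, 1))
```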

The latitude and longitude on a map made against a local datum may not be the same as one obtained from a GPS receiver. Converting coordinates from one datum to another requires a datum transformation such as a Helmert transformation, although in certain situations a simple translation may be sufficient.
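
A seven-parameter Helmert transformation can be sketched as follows. This minimal Python version uses the common small-angle approximation and the "position vector" sign convention for rotations, and it operates on geocentric Cartesian coordinates (X, Y, Z) rather than on latitude and longitude directly. The function name is hypothetical and the parameter values are placeholders, not the published parameters of any real datum pair.

```python
def helmert(xyz, translation, scale_ppm, rotation_rad):
    """Apply a small-angle, seven-parameter Helmert transformation.

    xyz          -- geocentric Cartesian coordinates in the source datum
    translation  -- (tx, ty, tz) shifts, in the same units as xyz
    scale_ppm    -- scale change in parts per million
    rotation_rad -- (rx, ry, rz) rotations in radians (position vector convention)
    """
    x, y, z = xyz
    tx, ty, tz = translation
    rx, ry, rz = rotation_rad
    m = 1.0 + scale_ppm * 1e-6
    return (
        tx + m * (x - rz * y + ry * z),
        ty + m * (rz * x + y - rx * z),
        tz + m * (-ry * x + rx * y + z),
    )


# With zero scale change and zero rotation, only the translation remains.
shifted = helmert((1.0, 2.0, 3.0), (10.0, 20.0, 30.0), 0.0, (0.0, 0.0, 0.0))
print(shifted)
```

Setting the rotations and scale to zero reduces the transformation to the simple translation mentioned above; published parameter sets also specify which rotation sign convention they follow, which must match the code.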

In popular GIS software, data expressed in latitude/longitude is often described as being in a geographic coordinate system. For example, latitude/longitude data referenced to the North American Datum of 1983 is denoted by 'GCS North American 1983'.

Data quality

While no digital model can be a perfect representation of the real world, it is important that GIS data be of a high quality. In keeping with the principle of homomorphism, the data must be close enough to reality so that the results of GIS procedures correctly correspond to the results of real world processes. This means that there is no single standard for data quality, because the necessary degree of quality depends on the scale and purpose of the tasks for which it is to be used. Several elements of data quality are important to GIS data:

- Accuracy

- The degree of similarity between a represented measurement and the actual value; conversely, error is the amount of difference between them. In GIS data, there is concern for accuracy in representations of location (positional accuracy), property (attribute accuracy), and time. For example, the US 2020 Census says that the population of Houston on April 1, 2020 was 2,304,580; if it was actually 2,310,674, this would be an error and thus a lack of attribute accuracy.

- Precision

- The degree of refinement in a represented value. In a quantitative property, this is the number of significant digits in the measured value. An imprecise value is vague or ambiguous, including a range of possible values. For example, if one were to say that the population of Houston on April 1, 2020 was "about 2.3 million," this statement would be imprecise, but likely accurate because the correct value (along with many incorrect values) is included. As with accuracy, representations of location, property, and time can all be more or less precise. Resolution is a commonly used expression of positional precision, especially in raster data sets.

- Uncertainty

- A general acknowledgement of the presence of error and imprecision in geographic data. That is, it is a degree of general doubt, given that it is difficult to know exactly how much error is present in a data set, although some form of estimate may be attempted (a confidence interval being such an estimate of uncertainty). This is sometimes used as a collective term for all or most aspects of data quality.

- Vagueness or fuzziness

- The degree to which an aspect (location, property, or time) of a phenomenon is inherently imprecise, rather than the imprecision being in a measured value. For example, the spatial extent of the Houston metropolitan area is vague, as there are places on the outskirts of the city that are less connected to the central city (measured by activities such as commuting) than places that are closer. Mathematical tools such as fuzzy set theory are commonly used to manage vagueness in geographic data.

- Completeness

- The degree to which a data set represents all of the actual features that it purports to include. For example, if a layer of "roads in Houston" is missing some actual streets, it is incomplete.

- Currency

- The most recent point in time at which a data set claims to be an accurate representation of reality. This is a concern for the majority of GIS applications, which attempt to represent the world "at present," in which case older data is of lower quality.

- Consistency

- The degree to which the representations of the many phenomena in a data set correctly correspond with each other. Consistency in topological relationships between spatial objects is an especially important aspect of consistency. For example, if all of the lines in a street network were accidentally moved 10 meters to the East, they would be inaccurate but still consistent, because they would still properly connect at each intersection, and network analysis tools such as shortest path would still give correct results.

- Propagation of uncertainty

- The degree to which the quality of the results of spatial analysis methods and other processing tools derives from the quality of input data. For example, interpolation is a common operation used in many ways in GIS; because it generates estimates of values between known measurements, the results will always be more precise, but less certain (as each estimate has an unknown amount of error).
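
The difference between accuracy and precision in the Houston example above can be worked through numerically. This short Python sketch uses the two population figures from the text (the "actual" value is the hypothetical one given there) and a hypothetical `round_to` helper to mimic the "about 2.3 million" statement.

```python
reported = 2_304_580  # census figure quoted in the example
actual = 2_310_674    # hypothetical true value from the example

# Accuracy: how far the reported value is from the actual value.
error = reported - actual
relative_error = abs(error) / actual


def round_to(value, unit):
    """Reduce precision by rounding to the nearest multiple of `unit`."""
    return round(value / unit) * unit


# Precision: "about 2.3 million" keeps only two significant digits.
imprecise = round_to(reported, 100_000)
# The imprecise statement covers every value that rounds to 2.3 million.
covers_actual = abs(actual - imprecise) <= 50_000

print(error, f"{relative_error:.4%}", imprecise, covers_actual)
```

The precise figure is off by several thousand people, while the imprecise one still contains the actual value within its implied range.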

GIS accuracy depends on the source data and on how it is encoded and referenced. Land surveyors have been able to provide a high level of positional accuracy using GPS-derived positions. High-resolution digital terrain and aerial imagery, powerful computers, and Web technology are changing the quality, utility, and expectations of GIS to serve society on a grand scale. Nevertheless, other source data, such as paper maps, also affect overall GIS accuracy, though these may be of limited use in achieving the desired accuracy.

In developing a digital topographic database for a GIS, topographical maps are the main source, while aerial photography and satellite imagery are additional sources for collecting data and identifying attributes, which can be mapped in layers over a scaled facsimile of the location. The scale of a map and its map projection (the way the geographic area is rendered) are very important, since the information content depends mainly on the chosen scale and the resulting locatability of the map's representations. To digitize a map, it must first be checked against its theoretical dimensions and then scanned into a raster format; the resulting raster data is then given its theoretical dimensions by a rubber-sheeting (warping) process known as georeferencing.
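
A simplified form of georeferencing can be sketched by fitting an affine transformation from pixel positions to map coordinates using three control points. This is an assumption-laden illustration: true rubber sheeting uses more control points and non-linear warping, and the function names and control point values here are hypothetical.

```python
def det3(m):
    """Determinant of a 3x3 matrix given as three rows."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)


def solve3(m, v):
    """Solve a 3x3 linear system with Cramer's rule."""
    d = det3(m)
    if d == 0:
        raise ValueError("control points must not be collinear")
    return [
        det3([row[:k] + [v[r]] + row[k + 1:] for r, row in enumerate(m)]) / d
        for k in range(3)
    ]


def fit_affine(pixels, coords):
    """Fit x = a*col + b*row + c and y = d*col + e*row + f from three control points."""
    m = [[float(col), float(row), 1.0] for col, row in pixels]
    return solve3(m, [x for x, _ in coords]), solve3(m, [y for _, y in coords])


def apply_affine(params, col, row):
    """Convert a pixel position to map coordinates."""
    (a, b, c), (d, e, f) = params
    return (a * col + b * row + c, d * col + e * row + f)


# Made-up control points: pixel (col, row) paired with known map (x, y).
pixels = [(0, 0), (100, 0), (0, 100)]
coords = [(500000.0, 4200000.0), (500200.0, 4200000.0), (500000.0, 4199800.0)]
params = fit_affine(pixels, coords)
center = apply_affine(params, 50, 50)
print(center)
```

With these control points each pixel covers 2 map units, and map y decreases as the pixel row increases, which is typical for scanned images whose origin is the upper-left corner.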

A quantitative analysis of maps brings accuracy issues into focus. The electronic and other equipment used to make measurements for GIS is far more precise than the machines of conventional map analysis. All geographical data are inherently inaccurate, and these inaccuracies will propagate through GIS operations in ways that are difficult to predict.

Raster-to-vector translation

Data restructuring can be performed by a GIS to convert data into different formats. For example, a GIS may be used to convert a satellite image map to a vector structure by generating lines around all cells with the same classification, while determining the cell spatial relationships, such as adjacency or inclusion.
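
The idea of generating lines around cells with the same classification can be sketched on a tiny classified grid. The Python example below emits one unit-length edge wherever two neighboring cells differ in class and records which classes are adjacent; it does not join the edges into polygons, and the function name and sample grid are hypothetical.

```python
def class_boundaries(grid):
    """Find edges between differently classified neighboring cells.

    Returns (segments, adjacency): segments are ((x1, y1), (x2, y2)) edges in
    cell-corner coordinates (x = column, y = row, origin at the upper left);
    adjacency is the set of class pairs that share at least one edge.
    """
    segments, adjacency = [], set()
    rows, cols = len(grid), len(grid[0])
    for r in range(rows):
        for c in range(cols):
            here = grid[r][c]
            # Compare with the cell to the right: a vertical boundary edge.
            if c + 1 < cols and grid[r][c + 1] != here:
                segments.append(((c + 1, r), (c + 1, r + 1)))
                adjacency.add(tuple(sorted((here, grid[r][c + 1]))))
            # Compare with the cell below: a horizontal boundary edge.
            if r + 1 < rows and grid[r + 1][c] != here:
                segments.append(((c, r + 1), (c + 1, r + 1)))
                adjacency.add(tuple(sorted((here, grid[r + 1][c]))))
    return segments, adjacency


# W = water, F = forest (made-up classification).
classified = [
    ["W", "W", "F"],
    ["W", "F", "F"],
]
segments, adjacency = class_boundaries(classified)
print(segments, adjacency)
```

A fuller conversion would chain these edges into closed rings and attach the class of each enclosed region as an attribute.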

More advanced data processing can occur with image processing, a technique developed in the late 1960s by NASA and the private sector to provide contrast enhancement, false color rendering, and a variety of other techniques, including the use of two-dimensional Fourier transforms. Since digital data is collected and stored in various ways, two data sources may not be entirely compatible. A GIS must therefore be able to convert geographic data from one structure to another. In so doing, the implicit assumptions behind different ontologies and classifications require analysis. Object ontologies have gained increasing prominence as a consequence of object-oriented programming and sustained work by Barry Smith and co-workers.

Spatial ETL

Spatial ETL tools provide the data processing functionality of traditional extract, transform, load (ETL) software, but with a primary focus on the ability to manage spatial data. They provide GIS users with the ability to translate data between different standards and proprietary formats, whilst geometrically transforming the data en route. These tools can come in the form of add-ins to existing wider-purpose software such as spreadsheets.
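
The extract, transform, load pattern for spatial data can be sketched with Python's standard library alone. This hypothetical example extracts points from CSV text, transforms their coordinates from feet to meters, and loads them into a GeoJSON-style structure; the column names and sample rows are assumptions, and a real tool would also handle coordinate reference systems.

```python
import csv
import io
import json

FEET_TO_METERS = 0.3048


def csv_to_feature_collection(csv_text):
    """Extract rows from CSV, convert feet to meters, and build point features."""
    features = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        # Transform: geometric conversion applied en route.
        x = float(row["x_ft"]) * FEET_TO_METERS
        y = float(row["y_ft"]) * FEET_TO_METERS
        features.append(
            {
                "type": "Feature",
                "geometry": {"type": "Point", "coordinates": [x, y]},
                "properties": {"name": row["name"]},
            }
        )
    return {"type": "FeatureCollection", "features": features}


# Extract: made-up source data in a flat tabular format.
source = "name,x_ft,y_ft\nhydrant,1000,2000\nvalve,1500,2500\n"
collection = csv_to_feature_collection(source)
# Load: serialize to the target format.
output = json.dumps(collection, indent=2)
print(output)
```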

Spatial analysis

GIS spatial analysis is a rapidly changing field, and GIS packages are increasingly including analytical tools as standard built-in facilities, as optional toolsets, or as add-ins or 'analysts'. In many instances these are provided by the original software suppliers (commercial vendors or collaborative non-commercial development teams), while in other cases facilities have been developed and are provided by third parties. Furthermore, many products offer software development kits (SDKs), programming languages and language support, scripting facilities and/or special interfaces for developing one's own analytical tools or variants. The increased availability has created a new dimension to business intelligence termed "spatial intelligence" which, when openly delivered via intranet, democratizes access to geographic and social network data. Geospatial intelligence, based on GIS spatial analysis, has also become a key element for security. GIS as a whole can be described as conversion to a vectorial representation or to any other digitisation process.

Geoprocessing is a GIS operation used to manipulate spatial data. A typical geoprocessing operation takes an input dataset, performs an operation on that dataset, and returns the result of the operation as an output dataset. Common geoprocessing operations include geographic feature overlay, feature selection and analysis, topology processing, raster processing, and data conversion. Geoprocessing allows for definition, management, and analysis of information used to form decisions.
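
The input, operation, output pattern of geoprocessing can be illustrated with a feature selection. The Python sketch below takes an input dataset of points, keeps those that fall inside a polygon using the ray casting test, and returns them as a new dataset; the function names and sample data are hypothetical, and points lying exactly on the boundary are not treated specially.

```python
def point_in_polygon(x, y, ring):
    """Ray casting test: is (x, y) inside the polygon given by its vertex ring?"""
    inside = False
    for (x1, y1), (x2, y2) in zip(ring, ring[1:] + ring[:1]):
        # Does a horizontal ray from the point cross this edge?
        if (y1 > y) != (y2 > y):
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def select_within(features, ring):
    """Geoprocessing step: input dataset in, selected output dataset out."""
    return [f for f in features if point_in_polygon(*f["xy"], ring)]


# Made-up input dataset and study area.
wells = [
    {"name": "well 1", "xy": (5, 5)},
    {"name": "well 2", "xy": (15, 5)},
    {"name": "well 3", "xy": (1, 9)},
]
study_area = [(0, 0), (10, 0), (10, 10), (0, 10)]
selected = select_within(wells, study_area)
print([f["name"] for f in selected])
```

Because the output is itself a dataset, it can be fed straight into another geoprocessing step, which is how larger workflows are typically chained together.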

See also

In Spanish: Sistema de información geográfica para niños
